NVIDIA has lifted the curtain on its next-generation AI computing platform, Rubin, with CEO Jensen Huang unveiling the architecture on the CES stage as the company’s boldest step yet to meet exploding global demand for artificial intelligence. Positioned as the successor to Blackwell, Rubin is designed to power the next wave of AI training and inference at unprecedented scale. “Vera Rubin is designed to address this fundamental challenge that we have: The amount of computation necessary for AI is skyrocketing,” Huang told the audience, before confirming, “Today, I can tell you that Vera Rubin is in full production.”

The new architecture extends Nvidia’s remarkable run of hardware platforms that have transformed the company into the world’s most valuable corporation. Rubin is already lined up for deployment across major cloud providers and national supercomputing initiatives, underscoring how central Nvidia has become to the AI ecosystem. Early adopters include Anthropic, OpenAI, Amazon Web Services (AWS), HPE’s upcoming Blue Lion supercomputer, and the Doudna supercomputer at Lawrence Berkeley National Laboratory.

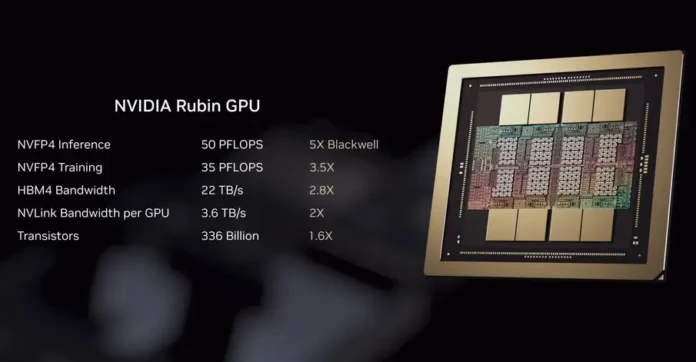

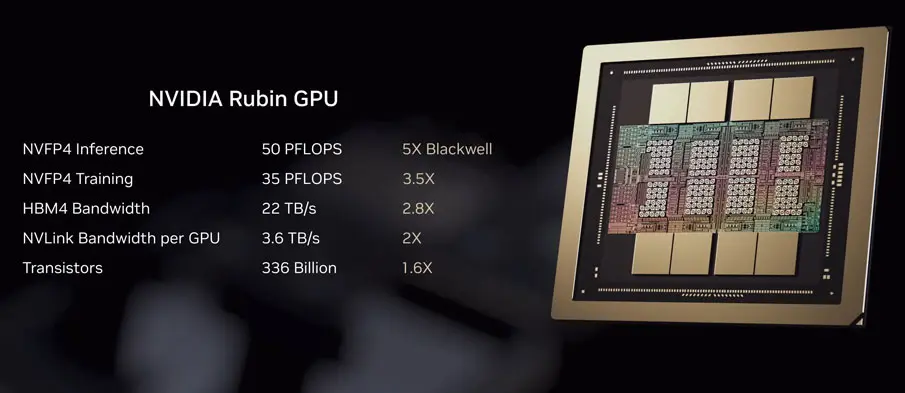

Named after pioneering astronomer Vera Florence Cooper Rubin, the platform integrates six tightly coordinated chips. At its core is the Rubin GPU, supported by next-generation BlueField networking, an upgraded NVLink interconnect, and a new Vera CPU purpose-built for agentic reasoning and long-running AI tasks. Together, these components are designed to function as a unified system rather than isolated accelerators, reflecting the growing complexity of modern AI workloads.

According to Nvidia, Rubin delivers a dramatic performance leap. The company claims it can run training workloads up to three and a half times faster than Blackwell, while inference performance improves by as much as five times. Rubin also supports eight times more inference compute per watt and can scale to 50 petaflops, making it one of the most powerful AI platforms ever announced.

Beyond raw compute, the architecture addresses mounting memory and storage bottlenecks created by advanced AI models. Nvidia senior director Dion Harris highlighted the pressure from emerging use cases, saying, “As you start to enable new types of workflows, like agentic AI or long-term tasks, that puts a lot of stress and requirements on your KV cache.” To address this, he added, “So we’ve introduced a new tier of storage that connects externally to the compute device, which allows you to scale your storage pool much more efficiently.”

As competition intensifies and trillions of dollars are expected to flow into AI infrastructure globally, Rubin arrives as Nvidia’s latest answer to the industry’s rapidly escalating demands.