Cybersecurity researchers have identified multiple high-severity vulnerabilities, one of them rated critical, in the widely used artificial intelligence frameworks LangChain and LangGraph, raising serious concerns about data security in enterprise AI deployments. The flaws, disclosed in March 2026, could allow attackers to access sensitive information including filesystem data, environment secrets, and conversation histories.

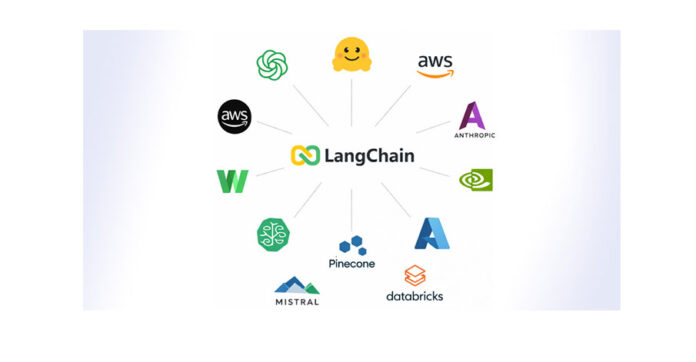

LangChain and LangGraph are popular open-source frameworks used to build applications powered by large language models (LLMs). Recent download statistics show how widely they are adopted: LangChain, LangChain-Core, and LangGraph recorded more than 52 million, 23 million, and 9 million downloads, respectively, in a single week. This extensive usage amplifies the potential impact of the newly discovered vulnerabilities.

Researchers revealed that the vulnerabilities provide three distinct attack paths that could be exploited independently. One flaw, tracked as CVE-2026-34070 with a CVSS score of 7.5, is a path traversal issue that enables attackers to access arbitrary files through manipulated prompt templates. Another, CVE-2025-68664 with a critical CVSS score of 9.3, allows the leakage of API keys and environment secrets through unsafe data deserialization. The third vulnerability, CVE-2025-67644 with a CVSS score of 7.3, is an SQL injection flaw in LangGraph that could allow attackers to execute arbitrary database queries.
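
To illustrate the general class of bug behind the path traversal flaw, the following is a minimal Python sketch of a containment check for user-supplied file paths. It is not the code from the LangChain patch; the function name `resolve_inside` and the directory layout are hypothetical and serve only to show the idea of refusing any path that resolves outside an allowed base directory.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    """Return the resolved path only if it stays inside base_dir.

    Hypothetical helper for illustration; not part of LangChain.
    Requires Python 3.9+ for Path.is_relative_to.
    """
    base = Path(base_dir).resolve()
    # Joining and resolving collapses ".." segments and follows symlinks,
    # so the check below runs against the real target location.
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate
```

Under this sketch, a request for `greeting.txt` resolves normally, while an input such as `../../etc/passwd` or an absolute path raises `ValueError` before any file is opened.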

If successfully exploited, these vulnerabilities could enable attackers to extract highly sensitive data such as Docker configuration files, system-level secrets, and user conversation histories. Experts warn that such exposures could compromise enterprise systems, particularly those relying heavily on AI-driven workflows and automated decision-making processes.

Security researchers emphasized that the findings show modern AI frameworks remain vulnerable to traditional software security issues such as path traversal, unsafe deserialization, and SQL injection. Because AI applications are built on conventional software architectures, established cybersecurity risks persist in newer technological ecosystems.
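
SQL injection, one of the traditional issues named above, is usually avoided with parameterized queries. The sketch below uses Python's standard `sqlite3` module to contrast the two approaches. The `conversations` table, its columns, and the function names are assumptions made for this illustration and do not reflect LangGraph's actual schema or code.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Build an in-memory database with a hypothetical conversations table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE conversations (thread_id TEXT, user_id TEXT)")
    conn.executemany(
        "INSERT INTO conversations VALUES (?, ?)",
        [("t1", "alice"), ("t2", "bob")],
    )
    return conn


def find_threads_unsafe(conn: sqlite3.Connection, user_id: str) -> list:
    # Vulnerable: the input is spliced into the SQL text, so a quote
    # character in user_id can change the structure of the query.
    query = f"SELECT thread_id FROM conversations WHERE user_id = '{user_id}'"
    return [row[0] for row in conn.execute(query)]


def find_threads_safe(conn: sqlite3.Connection, user_id: str) -> list:
    # Parameterized: the driver passes user_id as data, never as SQL.
    query = "SELECT thread_id FROM conversations WHERE user_id = ?"
    return [row[0] for row in conn.execute(query, (user_id,))]
```

With the input `nobody' OR '1'='1`, the unsafe version returns every thread in the table, while the parameterized version treats the whole string as a literal user ID and returns nothing.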

Patches have been released to address these vulnerabilities, with fixes available in updated versions of LangChain-Core and LangGraph components. Organizations using these frameworks are being urged to upgrade immediately and review their systems for potential exposure. The incident serves as a reminder of the importance of integrating robust security practices into the rapidly evolving field of artificial intelligence.
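
As a starting point for that review, a team might first inventory which versions of the affected packages are installed. The following Python sketch does this with the standard library's `importlib.metadata`. The package names are the PyPI distribution names assumed for the three frameworks mentioned in this article, and the script deliberately does not hard-code patched version numbers, which should be taken from the official advisories.

```python
from importlib.metadata import PackageNotFoundError, version

# Assumed PyPI distribution names for the frameworks named in the article.
PACKAGES = ("langchain", "langchain-core", "langgraph")


def installed_versions(packages=PACKAGES) -> dict:
    """Map each package name to its installed version, or None if absent."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    for name, ver in installed_versions().items():
        print(f"{name}: {ver or 'not installed'}")
```

The reported versions can then be compared against the fixed releases listed in each advisory.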