Cybersecurity researchers have identified new attack campaigns where threat actors are abusing AI development platforms such as Hugging Face and ClawHub to distribute malware, highlighting growing risks within the AI supply chain ecosystem.

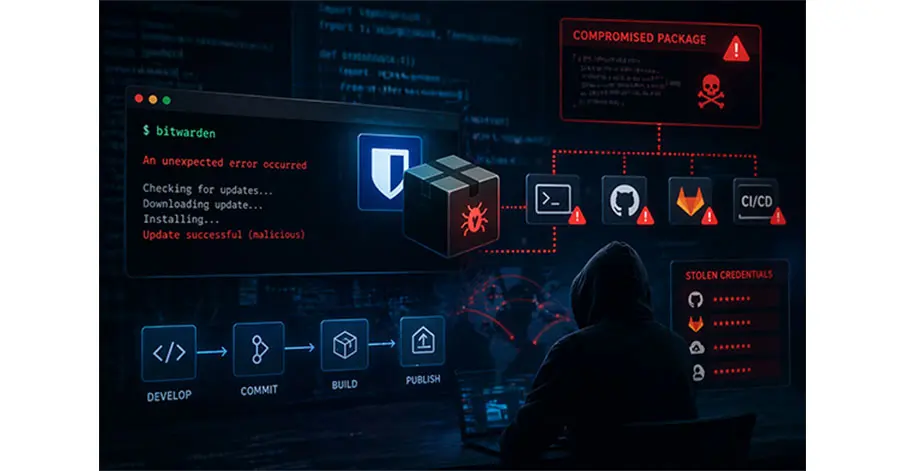

Unlike traditional platform breaches, these attacks rely heavily on social engineering. Malicious actors upload trojanized files and resources that appear legitimate, tricking users into downloading and executing hidden malicious instructions. These files are designed to run commands, fetch additional payloads, and install harmful dependencies on victim systems.

A key technique used in these campaigns is indirect prompt injection, where attackers embed concealed instructions inside files that AI systems interact with. These hidden prompts can cause AI agents to unknowingly execute malicious actions, effectively turning trusted tools into attack vectors without the user’s awareness.

On ClawHub, researchers identified nearly 600 malicious “skills” across multiple developer accounts. These were used to distribute a range of threats, including trojans, cryptominers, and information stealers targeting Windows and macOS environments. Some payloads included well-known malware such as Atomic macOS Stealer (AMOS).

Similarly, campaigns leveraging Hugging Face involved malicious repositories hosting files that triggered multi-stage infection chains. These attacks targeted multiple platforms, including Windows, Linux, and Android, delivering malware such as infostealers, loaders, and backdoors.

The findings underscore a broader concern: as AI platforms become more widely adopted for sharing code and models, they are increasingly being exploited as distribution channels for malware. Attackers are capitalizing on the inherent trust users place in these ecosystems, turning collaborative AI environments into potential entry points for large-scale cyber threats.