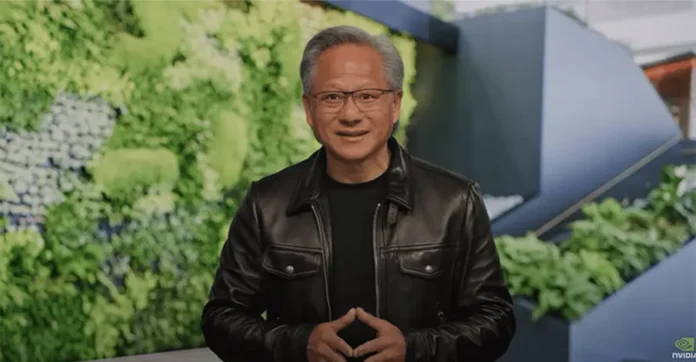

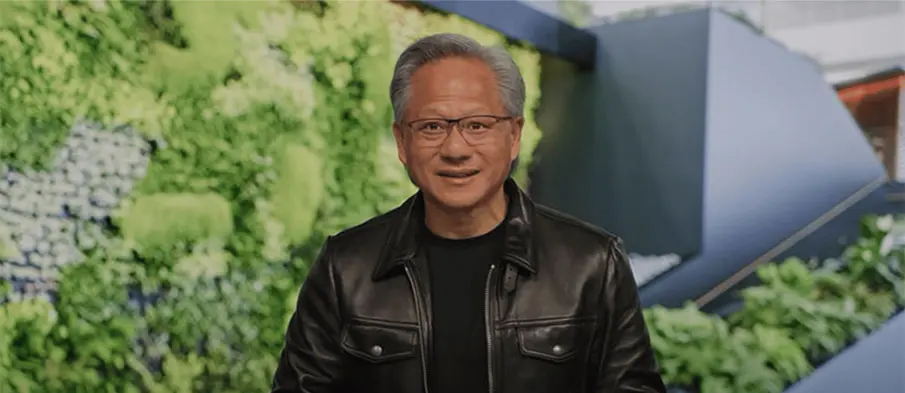

The charitable foundation established by Nvidia CEO Jensen Huang and his wife, Lori Huang, has committed more than $108 million in AI computing resources to universities and nonprofit research institutions. The donation, made through AI cloud infrastructure company CoreWeave, is intended to support scientific research and artificial intelligence innovation across the academic and nonprofit sectors.

According to recent filings, the foundation purchased large-scale computing time from CoreWeave and will distribute it to institutions conducting AI and scientific research. Nvidia also plans to provide free engineering support to some grant recipients, helping researchers make efficient use of advanced AI infrastructure and accelerate experimentation in fields ranging from machine learning to computational science.

The move deepens the relationship between Nvidia and CoreWeave, one of the fastest-growing AI cloud infrastructure providers in the market. Nvidia has invested heavily in CoreWeave over the past two years, including a reported $2 billion investment earlier this year. The chipmaker has also signed multi-billion-dollar agreements to secure cloud computing capacity from the company as global demand for AI infrastructure continues to rise.

Industry analysts believe the donation reflects how AI companies are increasingly supporting research ecosystems to drive long-term innovation and expand adoption of advanced AI technologies. Access to high-performance computing has become one of the biggest challenges for universities and nonprofit labs as AI models grow larger and more computationally expensive. By providing cloud-based GPU infrastructure, initiatives like this could help smaller institutions compete in advanced AI research without making massive investments in in-house infrastructure.

At the same time, some market observers have pointed to the strategic benefits such initiatives create for companies operating within the AI infrastructure ecosystem. Nvidia designs the GPUs powering CoreWeave’s cloud systems, while CoreWeave serves as a major distribution and infrastructure partner for Nvidia’s AI hardware. Analysts note that the close relationship highlights how AI infrastructure providers, chipmakers, and cloud platforms are becoming increasingly interconnected as the industry scales rapidly.

The broader AI infrastructure market is growing rapidly as enterprises, governments, and research organizations worldwide race to secure computing power for generative AI and large-scale machine learning systems. Nvidia and CoreWeave are at the center of this expansion, benefiting from surging global demand for GPUs, AI servers, and cloud-based computing services. Experts believe investment in AI infrastructure could continue to rise sharply over the next several years as artificial intelligence becomes more deeply integrated across industries and research domains.